Generative AI is empowering people to express themselves and interact with the world in ways that were not possible even a few months ago. It is a technology exploding with possibility. At Kantar, we’ve discussed what Large Language Models (LLMs) could mean for market research; now we consider the potential of multimedia, such as images and video. We explore what Generative AI could mean for visual creative and concept development: its potential to help companies craft ads and new concepts that resonate more deeply with people by tapping into their needs in new ways.

One of the most common questions raised about AI is whether technology can really replace creativity, usually considered a uniquely human ability. We would say the answer is partly yes and partly no:

- Yes, in the sense that Generative AI has advanced to the point where anyone can, with a few simple prompts, generate what looks like amazing art, using tools such as Midjourney, DALL-E, Canva text-to-image, Fliki, Synthesia and Peech AI (to name just a few).

- But also no, because Generative AI is trained on vast amounts of human-created work, including the work of real artists and their styles. This is what enables the technology to do what it does, but human artistry has not been replaced. Strategically prompting AI systems and moderating their outputs will become a prominent role in the months and years to come.

But asking if technology can ‘replace’ a person may be the wrong question. We argue that humans and technology, when combined optimally, result in a better product.

AI in the creative development process

AI is not new to the creative process; it has a long history there. At Kantar, we help companies with solutions such as Link AI, which tests creative variations in bulk so that companies can make rapid, confident decisions about which ads to run, and NeedScope AI, which analyses a brand’s imagery and video to assess how closely they align with the brand’s targeted emotive positioning across touchpoints. We are also enhancing our Concept eValuate product to use AI to automatically identify and optimise the innovation concepts with the greatest top-line growth potential. These products are informed by high-quality training data: Link AI is built on a database of over 250,000 campaigns and 35 million human interactions.

Using image and video AI in creative and concept testing

1. Concept evaluation

Imagine spending a lot of effort creating an ad or product concept that is likely to underperform. With Generative AI you could make near-instant changes to the original copy and re-test those changes to see if they outperform the original. The text in the concept could be used as a Generative AI prompt to create a more compelling image that captures the essence of the concept. This could be an iterative process where both the text and image(s) are refined together with oversight from a human analyst.

We tested this approach on a real product concept for a ready-made ingredients box delivery service. First, we used GPT-4 to optimise the text, which gave a higher predicted performance than the original. We then used DALL-E to optimise the concept imagery, and found that the combination of optimised text and imagery, reviewed by a human, achieved the highest performance.
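The iterative loop described in this section can be sketched in Python. This is a minimal illustration under stated assumptions, not Kantar’s implementation: `rewrite_text`, `regenerate_image`, `predict_score` and `human_approves` are hypothetical stand-ins for an LLM rewrite, an image generator, a performance-prediction model and a human analyst’s review.

```python
from typing import Callable, Tuple


def refine_concept(
    text: str,
    image: str,
    rewrite_text: Callable[[str], str],            # hypothetical: e.g. an LLM rewrite of the copy
    regenerate_image: Callable[[str], str],        # hypothetical: text-to-image call using the copy as prompt
    predict_score: Callable[[str, str], float],    # hypothetical: predicted concept performance
    human_approves: Callable[[str, str], bool],    # hypothetical: human analyst sign-off
    rounds: int = 3,
) -> Tuple[str, str, float]:
    """Greedy refinement: keep a candidate only if it scores higher AND a human approves it."""
    best_text, best_image = text, image
    best_score = predict_score(best_text, best_image)
    for _ in range(rounds):
        # Step 1: propose new concept copy.
        cand_text = rewrite_text(best_text)
        # Step 2: use the new copy as the prompt for a new concept image.
        cand_image = regenerate_image(cand_text)
        cand_score = predict_score(cand_text, cand_image)
        # Step 3: the human analyst has the final say on every accepted change.
        if cand_score > best_score and human_approves(cand_text, cand_image):
            best_text, best_image, best_score = cand_text, cand_image, cand_score
    return best_text, best_image, best_score
```

The loop is deliberately greedy: a candidate replaces the current best only if its predicted score improves and the reviewer signs off, so the human keeps a veto over every change.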

2. Video summary

Our Link survey asks respondents to summarise an ad in their own words. This is valuable to media agencies who want to know if the ad conveys the intended message. If ads can be accurately summarised at scale, without asking respondents for input, they can be catalogued and subject to a meta-analysis on best practice from the most successful ads (or themes that are common to underperforming ads). Generative AI, when combined with Optical Character Recognition (OCR) - the process of recognising text from images - can help achieve high-quality video summarisation faster with fewer human inputs. This is again something we are actively pursuing at Kantar.
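The OCR side of such a pipeline can be sketched as follows: sample frames, read any on-screen text, drop consecutive repeats, and assemble a prompt for a text model to summarise. This is a sketch under assumptions, not a description of Kantar’s system: `ocr` is a hypothetical callable standing in for a real OCR engine (for example, `pytesseract.image_to_string` wraps the Tesseract engine), frames are assumed to be already decoded, and the prompt wording is illustrative only.

```python
from typing import Callable, List, Sequence


def collect_on_screen_text(
    frames: Sequence[object],
    ocr: Callable[[object], str],   # hypothetical OCR function: frame -> recognised text
    every_n: int = 10,
) -> List[str]:
    """Run OCR on every n-th frame and keep the text in order of appearance."""
    texts: List[str] = []
    for frame in frames[::every_n]:
        text = ocr(frame).strip()
        # Skip empty results and text that is still on screen from the previous sample.
        if text and (not texts or texts[-1] != text):
            texts.append(text)
    return texts


def build_summary_prompt(on_screen_text: List[str], transcript: str = "") -> str:
    """Assemble an illustrative prompt asking a text model to summarise the ad."""
    lines = [
        "Summarise this video ad in one or two sentences, as a viewer would describe it.",
        "On-screen text, in order of appearance:",
    ]
    lines += [f"- {t}" for t in on_screen_text]
    if transcript:
        lines.append(f"Voice-over transcript: {transcript}")
    return "\n".join(lines)
```

Only consecutive duplicates are removed, because the same text (a brand name on an end card, say) may legitimately reappear later in the ad.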

3. Creative shaping

While most Generative AI tools are built for images, you can split a video into individual frames, pass them through image AI tools to edit them appropriately, and stitch the results back together. Such edits could range from relatively simple (changing the overall colour palette of the ad) to very sophisticated (replacing an actor with a celebrity). This would drastically increase the number of variations that could be produced, tested and re-tested, in less time and with less effort: human creativity supercharged by Generative AI technology. This is an ambitious idea given the current state of Generative AI technology, but it is something we are already testing and are excited about.
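The split, edit and stitch idea can be illustrated with a toy example. The sketch assumes frames have already been decoded into nested lists of RGB tuples (in practice a library such as OpenCV, via `cv2.VideoCapture` and `cv2.VideoWriter`, would handle decoding and re-encoding), and `warm_palette` is a hypothetical, deterministic colour edit standing in for a generative image edit.

```python
from typing import Callable, List, Tuple

Pixel = Tuple[int, int, int]   # (R, G, B), each 0-255
Frame = List[List[Pixel]]      # rows of pixels


def warm_palette(frame: Frame, shift: int = 30) -> Frame:
    """Hypothetical example edit: push every pixel towards warmer tones (more red, less blue)."""
    def clamp(v: int) -> int:
        return max(0, min(255, v))
    return [[(clamp(r + shift), g, clamp(b - shift)) for (r, g, b) in row] for row in frame]


def edit_video(frames: List[Frame], edit: Callable[[Frame], Frame]) -> List[Frame]:
    """Split -> edit -> stitch: apply the same edit to each frame, preserving frame order."""
    return [edit(frame) for frame in frames]
```

Because this edit is deterministic, every frame is changed in exactly the same way. A generative model applied to each frame independently gives no such guarantee, so its output can flicker or drift from one frame to the next.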

So, we can get rid of humans, right?

Definitely not. For a start, machines cannot understand the nuances of a brand’s identity and personality, a crucial component of creating content that fits a brand’s commercial and community objectives. The art of storytelling, of stitching together a compelling and authentic narrative, remains a distinctly human one. So does the art of using emotion appropriately to elicit the desired responses. A machine cannot make the right cultural decisions, consider principles of diversity and inclusivity, or interrogate a brand’s purpose.

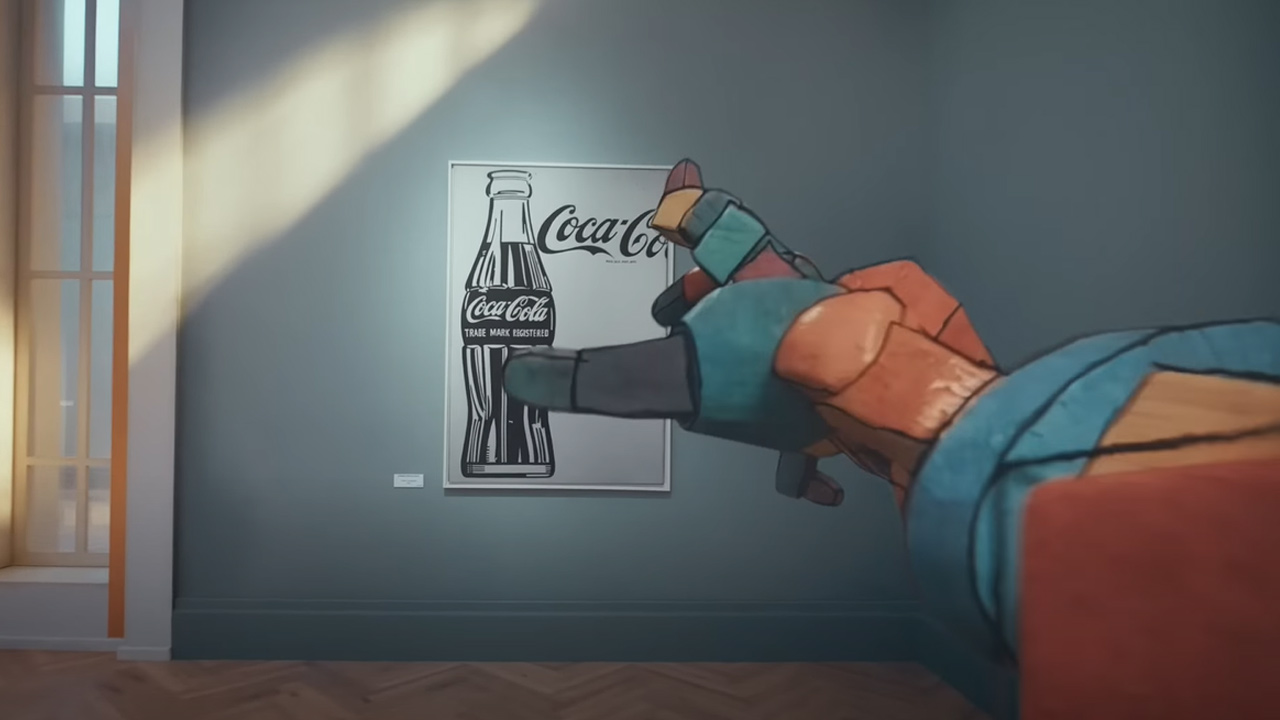

And of course, things can simply go wrong. Brands such as Mint Mobile have humorously played with this idea: in January 2023 the company released an ad ‘testing’ ChatGPT’s ability to write advertising scripts. The ad plays on the technology’s limitations, such as taking instructions literally or not understanding the nuance of humour. The point is well made: humans need to craft the story. Coca-Cola understood this when creating its recent ad, Masterpiece, which integrates AI smartly with live action and other digital effects, with stunning results.

So, our jobs remain safe. There is no doubt that the notion of creativity is evolving in a way that is expanding our own human ability to be creative, and that should excite us!

What are the risks?

Generative AI tools have made headlines recently. In one notable example, an AI-generated image won a prestigious photography contest, and the submitter rejected the award to make a statement about the role of AI in art.

As with any new technology (particularly one as culturally transformative as this) there are risks, limitations and areas of uncertainty. In our previous article we covered some of the overarching issues, but let’s consider a few points specific to images and video:

- The IP status of AI-generated content is debatable, with no guaranteed right to exclusive use and the potential to infringe third-party IP rights (depending on the training data used). Each case requires an assessment before use for commercial purposes like a marketing campaign.

- There is potential to misuse someone’s likeness. The misappropriation of elements deeply connected to an individual’s identity (name, image, likeness etc.) may infringe their publicity rights or, depending on the country, personality rights with a similar scope.

- Collection and processing of images and video data need to comply with data protection and privacy regulations like GDPR and similar laws around the world.

- New AI laws may restrict certain uses and require rigorous risk assessments for others; for example, emerging biometric laws will govern how we use data such as facial coding.

Much of this legal groundwork is being explored by governments. There are also pending lawsuits involving this technology, the results of which will impact legislation. However, it will take time for new legal frameworks to become clear. In the meantime, it will be partly up to companies to make their own decisions about how to use this technology responsibly.

Conclusion

There is no doubt that Generative AI is affecting the way we think about human creativity. Companies are already testing and applying this emerging technology to promote higher levels of productivity and creativity, and at Kantar, we are already pursuing many initiatives.

But we shouldn’t forget that this technology is in many ways still in its infancy. There is important thinking still to be done, both within companies and society more broadly, about the role Generative AI can and should play in creative processes.

Fully automated creative outputs are probably not going to be the way to optimise creativity – rather, those in the creative space will need to become ever more adept at knowing how to get the best out of these tools, using the right prompts and moderation.

There are clear benefits in leveraging Generative AI to aid creative development. Although the technology is new, companies need to start engaging with its possibilities now or risk being left behind, and to consider how this technology, used responsibly, can help shape the most meaningful brands of tomorrow.

Get in touch to discuss the implications of Generative AI for your business and how Kantar can help.

Our view on key Generative AI tools for image and video

Text-to-image tools

There are many Generative AI tools, with more being introduced every day. In the text-to-image space, the most popular tools are DALL-E 2 (from OpenAI) and Midjourney; these produce the highest-quality images but require a subscription for access. There are also several free tools (such as Craiyon and Stable Diffusion) that can be slower and may produce lower-quality images. These free options still give valuable insight into how these models respond to prompts.

Whether you use free or paid tools, the input prompt goes a long way toward determining the quality of the output, perhaps even more so than in text-output tools such as ChatGPT. Take the two generated images below: the first uses a very simple prompt, while the second uses a more advanced prompt to create a more complex output.

Image 1 prompt: “Person smiling wide and holding a blue gum pack”:

Image 2 prompt: “Street-style advertisement photo full-body shot of person in a blue jacket, smiling wide and showing his teeth, in downtown New York while holding a small blue pack of gum in a rectangular shape in his right hand and showing it to the camera like an advertisement, sunset lighting - aspect ratio 9:16 --stylize 1000”:
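One way to keep more advanced prompts consistent across many variations is to assemble them from named parts (style, subject, details, tool parameters). The helper below is hypothetical and only a sketch; the `--ar` (aspect ratio) and `--stylize` suffixes follow Midjourney’s parameter syntax, and other tools use different conventions, so check each tool’s documentation.

```python
from typing import Dict, List, Optional


def build_prompt(
    subject: str,
    details: List[str],
    style: Optional[str] = None,
    params: Optional[Dict[str, str]] = None,  # e.g. {"ar": "9:16"}; syntax is tool-specific
) -> str:
    """Hypothetical helper: join style, subject and details, then append '--key value' parameters."""
    parts = ([style] if style else []) + [subject] + details
    prompt = ", ".join(parts)
    if params:
        # Dicts keep insertion order, so parameters appear in the order given.
        prompt += " " + " ".join(f"--{k} {v}" for k, v in params.items())
    return prompt


simple = build_prompt("person smiling wide and holding a blue gum pack", [])
advanced = build_prompt(
    "full-body shot of a person in a blue jacket",
    ["in downtown New York", "sunset lighting"],
    style="street-style advertisement photo",
    params={"ar": "9:16", "stylize": "1000"},
)
```

Keeping the parts separate makes it easy to vary one element at a time (the setting or the lighting, say) while holding the rest of the prompt fixed.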

Text-to-video tools

While Generative AI models have become increasingly proficient at creating text and static visual content, an obvious next challenge is to develop models that can create and edit videos from text prompts. This would enable extensive use cases in creative fields such as advertising, and it is an area of active research. Publicly available solutions are not yet as advanced as their text-to-image counterparts.

Current tools such as Synthesia and Google’s Imagen Video are popular. However, it is challenging to create outputs that are not only spatially consistent within each frame (like the images produced by good text-to-image tools) but also temporally consistent across frames (i.e., the frames flow together smoothly as a video).
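A crude way to see what temporal consistency means in practice is to measure how much each frame differs from the one before it and flag sudden jumps. This is a simplified sketch, not a standard benchmark: frames are assumed to be flattened lists of grayscale intensities (0 to 255), and the default threshold of 40 is an arbitrary illustrative value.

```python
from typing import List, Sequence


def frame_difference(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean absolute difference between two equally sized frames of pixel intensities."""
    if len(a) != len(b):
        raise ValueError("frames must have the same number of pixels")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def flag_jumps(frames: List[Sequence[float]], threshold: float = 40.0) -> List[int]:
    """Return indices i where the change from frame i-1 to frame i exceeds the threshold."""
    return [
        i for i in range(1, len(frames))
        if frame_difference(frames[i - 1], frames[i]) > threshold
    ]
```

A deliberate scene cut would also be flagged by this measure, so real evaluations typically rely on more sophisticated signals; the point here is only that a temporally consistent clip changes gradually from frame to frame.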

Consider these surreal examples of fully AI-generated beer and pizza ads – clearly something has gone wrong!

Other technical challenges include the computational cost of creating long videos (beyond a few seconds) and the lack of extensive captioned video datasets on which to train these models. However, if history is any guide, we can expect rapid advances here within the next couple of years, and it’s an exciting space to watch!