If the fear of losing past brand tracking data and comparisons holds you hostage in a project that no longer meets your objectives, fear no more. Technological advancements now mean that past data won’t be lost and can easily remain comparable to your new data. In this post, we explore your options for dealing with change in your data, whether that change is self-imposed or COVID-19 related.

So, here’s the issue: brand tracking programmes rely on data continuity to enable comparisons over time; yet, unless they evolve, they fail to keep pace with a changing environment. It is easy to get trapped in your tracker’s “Groundhog Day” unless you approach change as the enhancement it is: a chance to tell a deeper, richer and better story for a brand, to move closer to market reality, and to diagnose and predict more accurately.

With the COVID-19 pandemic forcing many sudden, unplanned changes in tracking methodologies, such as offline interviewing moving online, the timing has never been better for brands to evaluate whether their current tracking programme is really delivering what they need, and to be brave enough to make a change.

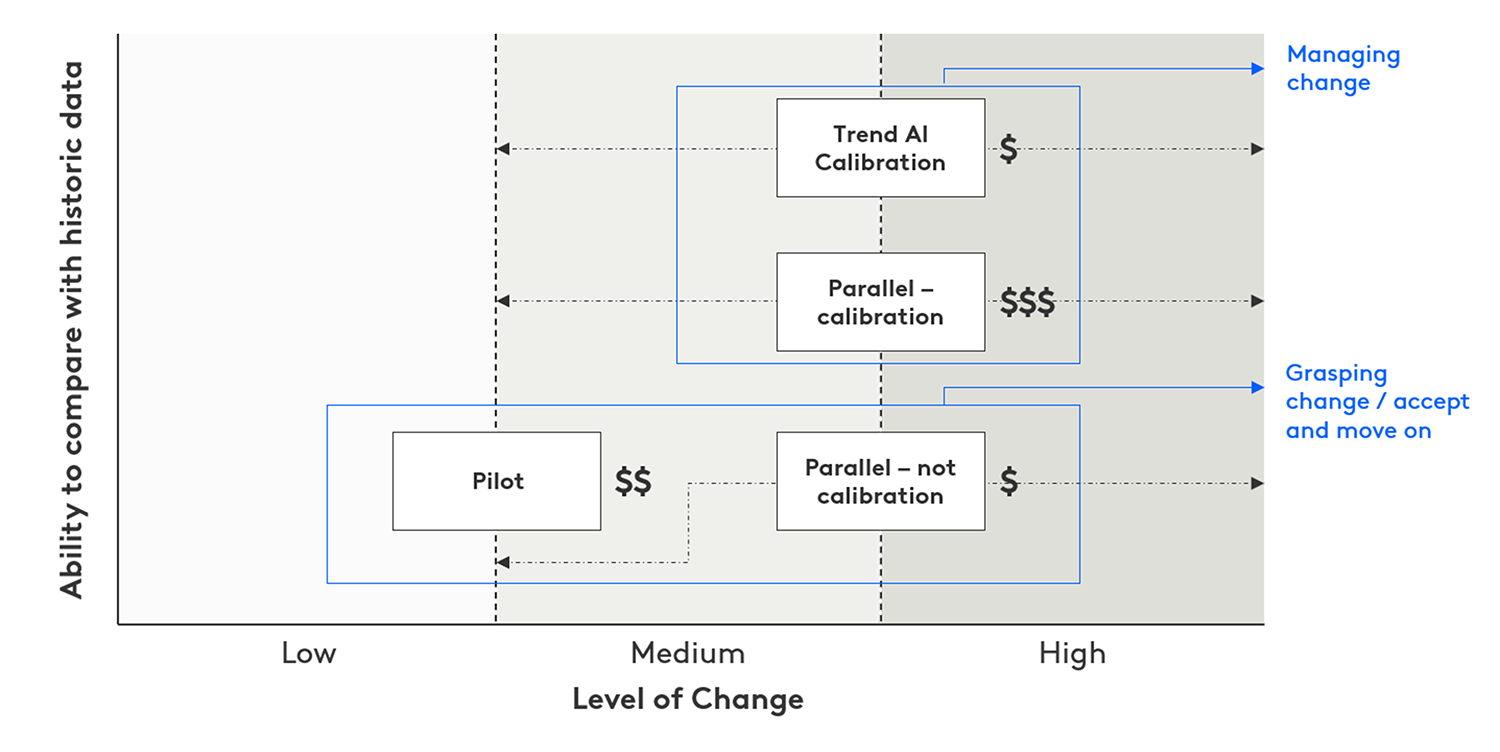

Grasping vs. managing change

These are two different things. You might be embracing change in both cases, but when you grasp change, you accept it and move on; when you manage change, you seek continuous comparison with your old brand data. Different approaches suit different types of change and come with varying benefits.

Pilots

To evaluate the impact of minor adjustments to your brand tracker, you are likely to run a pilot. It’s a preliminary test to identify weaknesses in your new design, but it can equally improve the quality of your consumer survey. Depending on your pilot results, you might want to go back to the drawing board and repeat the process, so time and funding limitations clearly apply.

Parallel runs

If you are pressed for time, a parallel run (“parallel – no calibration” in the graph below), which usually lasts one to two months, highlights the differences between two sets of results collected simultaneously. Think of these as the before and after data sets. Meaningfully interpreting the deviations here (what is making your new data different from the brand tracking data you had before?) is the step before creating new norms and benchmarks. In both scenarios, pilot or parallel run, you use the old data as your compass to design the future of your tracker. You grasp the change and move forward; your attachment to the past stops there.

Building a greater rapport with the past, however, requires a calibration process, i.e. a derived weight that will allow you to adjust the data in the old programme to match the new programme. Calibration sets the gold standard in managing trend change.

Parallel runs plus calibration

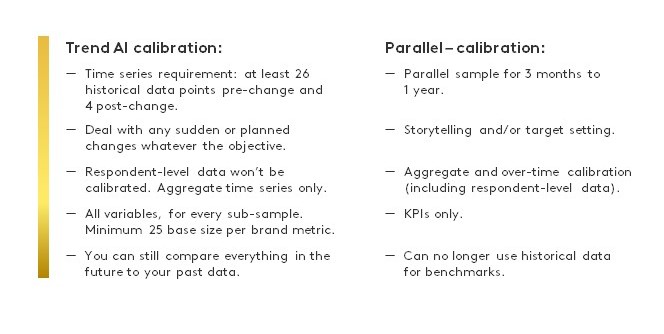

In its scientifically purest but most strenuous form, this calibration process starts with a parallel sample (“parallel – calibration” in the graph below), the length of which is defined by your objective. If your intention is to tell the story of your brand’s transformation, a three-month parallel run will suffice. If it’s about setting targets, often linked to compensatory metrics, achieving a higher level of confidence is a must; for that, we recommend running the two sets simultaneously for a year. So this is a long and, naturally, expensive option. Top-notch data scientists will get involved and, many measurement comparisons later, a successful calibration will result in a static ranking order of brands, but altered absolute values. The differences will be relative; the insight unchanged.
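To make the idea of a derived calibration weight concrete, here is a minimal Python sketch under simplifying assumptions: one multiplicative factor per KPI, computed as the ratio of the mean new-methodology score to the mean old-methodology score across brands during the parallel run, then applied to every historical data point. The brand names, scores and the functions `calibration_factor` and `calibrate_history` are hypothetical; a real calibration would likely be far more granular (by question, subsample and wave) and statistically tested.

```python
# Minimal sketch (hypothetical data and names): derive one multiplicative
# calibration weight for a KPI from a parallel run, then apply it to the old
# (pre-change) history only.

# Awareness (%) for the same brands, measured by both methodologies in parallel.
parallel_old = {"Brand A": 62.0, "Brand B": 48.0, "Brand C": 35.0}
parallel_new = {"Brand A": 55.8, "Brand B": 43.2, "Brand C": 31.5}

# Historical scores collected with the old methodology only.
history_old = {
    "2019-Q3": {"Brand A": 60.0, "Brand B": 50.0, "Brand C": 30.0},
    "2019-Q4": {"Brand A": 64.0, "Brand B": 47.0, "Brand C": 33.0},
}


def calibration_factor(old, new):
    """Ratio of the mean new score to the mean old score over shared brands."""
    brands = old.keys() & new.keys()
    mean_old = sum(old[b] for b in brands) / len(brands)
    mean_new = sum(new[b] for b in brands) / len(brands)
    return mean_new / mean_old


def calibrate_history(history, factor):
    """Rescale every past data point; new-methodology data is left untouched."""
    return {
        period: {brand: score * factor for brand, score in scores.items()}
        for period, scores in history.items()
    }


factor = calibration_factor(parallel_old, parallel_new)
calibrated = calibrate_history(history_old, factor)
print(f"calibration factor: {factor:.3f}")
for period, scores in calibrated.items():
    print(period, {brand: round(score, 1) for brand, score in scores.items()})
```

Because every past point is multiplied by the same positive factor, the brands’ rank order is preserved while absolute values change, which mirrors the outcome described above. Per-brand or per-subsample factors are possible too, but they can reorder brands, so they need more careful checking.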

Trend AI calibration

What is described above as a laborious, not-for-the-faint-hearted weighting scheme can also be delivered, with some variation, by a new Kantar system: Trend AI calibration*. To grasp the magnitude of this tech-enabled calibration, we first need to differentiate a moving average timeline from a true signal. Metrics shift all the time, creating trends and the noise that comes with them. A true signal alerts us to non-random trend shifts more reliably and earlier than close monitoring of a moving average timeline.

When a trend break occurs, many factors have likely contributed to it (after all, whilst modernising your tracker, why hold back?), and this fully automated solution is designed to deconstruct and make sense of them all. It removes the effect of the methodology change while taking account of seasonality, underlying trends, levels and intrinsic variation in each brand KPI. Instead of relying on a parallel sample, it analyses the whole history, the movement, and the old and new levels, and redefines them within the context of the entire time series, making the outcome more meaningful and comprehensive whilst retaining comparability with the past. It is still the gold standard, but cost-effective and requiring minimal human intervention for refinement. Using advanced time series analysis and AI, Trend AI calibration reweights the past data points in your time series to the new reality with 90% accuracy.
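The algorithms behind Trend AI calibration are not described in this post, so the following is not them. As a deliberately naive illustration of the general idea of adjusting past data across a known break without a parallel sample, this Python sketch estimates the methodology step as the mean of a few points after the break minus the mean of a few points before it, then shifts every pre-break point by that amount. The series, the break index, the `window` size and the function names are hypothetical.

```python
from statistics import mean


def level_shift(series, break_index, window=3):
    """Naive step estimate: mean of `window` points after the break minus the
    mean of `window` points before it. Ignores seasonality and trend."""
    if break_index - window < 0 or break_index + window > len(series):
        raise ValueError("not enough points on both sides of the break")
    before = series[break_index - window:break_index]
    after = series[break_index:break_index + window]
    return mean(after) - mean(before)


def adjust_past(series, break_index, shift):
    """Add the estimated step to every pre-break point; post-break data is kept."""
    return [x + shift if i < break_index else x for i, x in enumerate(series)]


# Hypothetical monthly KPI (%): the methodology changes at index 5.
kpi = [40, 41, 40, 42, 41, 36, 35, 37, 36]
shift = level_shift(kpi, break_index=5)
print("estimated shift:", shift)
print("adjusted series:", adjust_past(kpi, 5, shift))
```

This sketch cannot tell a genuine market movement at the break from a methodology effect, and it ignores seasonality and underlying trend; those are exactly the components the paragraph above says a full time series approach has to account for.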

In other words, it’s calibration made easy. Whatever your objective, Trend AI calibration allows you to deal with change in your brand tracker whilst keeping the benefit of comparing your new data to your past data.

Here’s a top-line guide to the requirements and benefits of both calibration methods.

Force majeure put our Trend AI calibration under the spotlight. In 2020, the COVID-19 pandemic forced the cessation of all face-to-face interviewing globally. Tracking programmes using offline methods for all or part of their sample faced sudden, unplanned changes in design, leading to structural changes in tracking data. Using innovation to automatically calibrate the time series of any (and all) brand KPIs, and so gauge the effect of this methodological change, became a routine task here at Kantar.

But whatever the lure for change, crisis-linked or not, future-proofing a study has always been a consideration for its owner: making it faster, more reliable, more representative. These enhancement thoughts are closely followed by the headache of dealing with the break in trend information. Trend AI calibration offers fast relief for that headache.

FAQs (and answers)

Among the topics we have debated most with our clients over the last few months, trend break sits comfortably in the top five. Here are some of the most frequent questions we’ve been asked:

What are the factors that trigger change?

Differences in sample and methodology are the top two. But these then trigger other changes: the scripting platform used, the proportion of respondents recruited from one group or another, the questionnaire length and structure. There may be a change in philosophy too (“better if we focus on salience this time”), which then has implications for scale usage, quota management and so on; it’s a domino effect. Individually, each change would be unlikely to create a trend break, but together they can have a significant effect.

What level of trend break should I expect?

That depends on how much you are changing, and how many of the factors above have crept in.

I’ve merely moved from laptop to mobile, so no changes should occur, right?

Wrong: as you’re changing the device, your sample has shifted too.

I’ve shortened my questionnaire significantly, but the remaining questions are intact. No change, right?

It depends. If the questions you kept are the unchanged opening section, probably yes. But if the shorter questionnaire meant moving entirely to mobile, there will be sample implications. Also, if your respondents can see the questionnaire length in advance, their mindset will now differ too, so change is inevitable.

If I make the changes bit by bit, will it soften the blow?

No, this is an incorrect assumption. We urge you to get it done and over with; don’t drag it out.

For my calibration, would it be the old or the new data that we would adjust?

Always the past data; if you adjust the new, you’ll never stop adjusting.

Top tips for changing your brand tracking

And here are our top tips on how to best prepare for change:

- Manage your expectations: you WILL find change in the “unchanged” measures too.

- You should know in advance that scores can move in either direction, and also that this can vary by question and subsample.

- Don’t fight the trend break. Over the years, some have tried to avoid seeing any change, sometimes by compromising on what is optimal for the methodology they are moving to. This is a bad idea because it never works. When the time for change has come, commit to it wholeheartedly.

- Adjust your questionnaire for today’s distracted minds. Optimal questionnaire length and design are vital in making your change worthwhile.

- A forensic diagnosis complements any calibration process or parallel run and will help you bridge the gap between old and new data. Start by looking at whether the rank order and relative position of the brands are still the same, and whether there is a decent correlation between the old and new data, even if the absolute scores have changed. Then seek to diagnose those differences on a case-by-case basis.

- Now is the right time to consider what other data you can access and how you can bring it together for best impact. A shorter questionnaire plus modules on top can transform the decision making in your business.
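The first checks in the forensic diagnosis tip above (is the brands’ rank order the same, and do old and new scores correlate?) can be sketched in a few lines of Python. This minimal example assumes hypothetical scores for four brands from an old and a new wave; it compares rank order and computes both the Pearson correlation of the raw scores and the Spearman rank correlation (Pearson applied to average ranks). The function names are illustrative and not part of any Kantar tool.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values):
    """1-based ranks; tied values share the average of the positions they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result


def compare_waves(old, new):
    """Rank-order and correlation checks between old and new brand scores."""
    brands = sorted(old.keys() & new.keys())
    x = [old[b] for b in brands]
    y = [new[b] for b in brands]
    same_order = (sorted(brands, key=old.get, reverse=True)
                  == sorted(brands, key=new.get, reverse=True))
    return {
        "same_rank_order": same_order,
        "pearson": pearson(x, y),
        "spearman": pearson(ranks(x), ranks(y)),
    }


# Hypothetical awareness scores (%) before and after the methodology change.
old_wave = {"Brand A": 62, "Brand B": 48, "Brand C": 35, "Brand D": 20}
new_wave = {"Brand A": 54, "Brand B": 44, "Brand C": 29, "Brand D": 18}
print(compare_waves(old_wave, new_wave))
```

If the rank order holds and both correlations are high, the change has mostly shifted absolute levels; brands that break the pattern are the ones to diagnose case by case.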

For more questions, tips, or a casual chat, please get in touch.

*Trend AI Calibration and Trend AI are sister Kantar systems. The same algorithms and the same advanced AI analytics are behind both. Once your calibration is completed, you can explore new and historical data at the same level for comparison. It’s highly recommended to use the two systems in combination so that you continue exploring your data going forward.